Sequence‑to‑sequence (Seq2Seq) models are a common type of neural network used in tasks where one sequence is transformed into another, such as machine translation, speech recognition, and image captioning. A typical Seq2Seq model is composed of two parts: an encoder and a decoder.

Seq2Seq Models Without Attention

In a traditional Seq2Seq model without attention, the encoder reads the entire input sequence (for example, a sentence in English or Chinese) and compresses all the information into a single fixed‑length vector, often called the context vector. This vector is then passed to the decoder, which generates the output sequence (for example, a sentence in Spanish).
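To make the fixed‑length bottleneck concrete, here is a minimal sketch of a toy RNN encoder in plain Python. The function name `rnn_encoder`, the random weights, and the Elman‑style update h_t = tanh(W_in x_t + W_rec h_{t-1}) are illustrative assumptions, not the architecture of any particular system. The point is only that the returned context vector has the same size whether the input has 2 steps or 50.

```python
import math
import random

def rnn_encoder(inputs, w_in, w_rec):
    """Toy Elman-style RNN: h_t = tanh(W_in x_t + W_rec h_{t-1}).
    Returns only the final hidden state, i.e. the fixed-length context vector."""
    hidden_size = len(w_rec)
    h = [0.0] * hidden_size
    for x in inputs:
        # The new state is computed entirely from the current input and the old state.
        h = [
            math.tanh(
                sum(w_in[i][j] * x[j] for j in range(len(x)))
                + sum(w_rec[i][j] * h[j] for j in range(hidden_size))
            )
            for i in range(hidden_size)
        ]
    return h

random.seed(0)
input_size, hidden_size = 3, 4
w_in = [[random.uniform(-1, 1) for _ in range(input_size)] for _ in range(hidden_size)]
w_rec = [[random.uniform(-1, 1) for _ in range(hidden_size)] for _ in range(hidden_size)]

short_seq = [[random.uniform(-1, 1) for _ in range(input_size)] for _ in range(2)]
long_seq = [[random.uniform(-1, 1) for _ in range(input_size)] for _ in range(50)]

print(len(rnn_encoder(short_seq, w_in, w_rec)))  # 4
print(len(rnn_encoder(long_seq, w_in, w_rec)))   # 4
```

Both sequences end up as four numbers, so everything the decoder learns about a 50‑step input has to pass through the same small vector as a 2‑step input.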

This approach has several limitations:

- Information bottleneck: all of the input information must fit into one fixed‑length vector, no matter how long the input is.

- For long input sequences, important information, especially from the beginning of the sequence, can be lost.

- By the time the decoder generates the later output tokens, information about parts of the input may have faded from its hidden state.

These difficulties motivated the introduction of attention mechanisms.

What Is Attention?

Attention allows the decoder to look back at the encoder outputs instead of relying on a single context vector. Rather than compressing the entire input into one vector, the encoder produces a sequence of hidden states, and the decoder can access all of them.

This brings several advantages:

- Better performance on long sentences.

- Reduced loss of long‑term information.

- Easier training, because gradients can flow directly between each decoder step and every encoder state (shorter gradient paths).

- The ability to visualize alignments between input and output words.

How Attention Works: Queries, Keys, and Values

Attention is computed using three main components:

- Queries (Q): come from the decoder (what we are looking for).

- Keys (K): come from the encoder outputs (what we compare against).

- Values (V): also come from the encoder outputs (the information we want to combine).

The process works as follows:

- We compute attention scores by comparing the queries with the keys.

- A softmax function converts these scores into an attention distribution (weights that are non‑negative and sum to 1).

- The final attention output (also called the context vector) is computed as a weighted sum of the values.
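The three steps above can be sketched in plain Python as follows. This sketch assumes dot‑product scoring (other scoring functions can be swapped in) and uses hypothetical toy vectors; `attend` is an illustrative name, not a library API.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that are non-negative and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attend(query, keys, values):
    # 1. Scores: compare the query with every key (dot product here).
    scores = [dot(query, k) for k in keys]
    # 2. Softmax turns the scores into an attention distribution.
    weights = softmax(scores)
    # 3. Context vector: weighted sum of the values.
    dim = len(values[0])
    context = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, context

keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, 0.0]

weights, context = attend(query, keys, values)
print([round(w, 3) for w in weights])  # [0.468, 0.063, 0.468]
print([round(c, 3) for c in context])  # [7.025, 2.975]
```

The query matches the first and third keys equally well, so those positions share most of the weight, and the context vector is pulled toward their values.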

Importantly:

- Queries interact with keys, not values.

- The output is a weighted sum of values, not queries.
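To illustrate these two points, the following sketch (hypothetical helper names, dot‑product scoring assumed) computes the weights from the query and keys alone, then reuses the same weights with two different sets of values. The output takes the dimension of the values, not of the query.

```python
import math

def attention_weights(query, keys):
    """Weights come from query-key comparisons only; values are never consulted."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]  # dot-product scores
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]  # numerically stable softmax
    total = sum(exps)
    return [e / total for e in exps]

def weighted_sum(weights, values):
    """The output is a mixture of the values, so it has the values' dimension."""
    return [sum(w * v[d] for w, v in zip(weights, values)) for d in range(len(values[0]))]

query = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
weights = attention_weights(query, keys)

values_a = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 2-dimensional values
values_b = [[100.0], [200.0], [300.0]]            # 1-dimensional values, same weights
out_a = weighted_sum(weights, values_a)
out_b = weighted_sum(weights, values_b)
print(len(out_a), len(out_b))  # 2 1
```

Because the weights are non‑negative and sum to 1, each output coordinate always lies between the smallest and largest corresponding value coordinate.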

Types of Attention

Additive (Concat) Attention

Also known as Bahdanau Attention, this method:

- Concatenates the query and key.

- Uses a small feed‑forward neural network (MLP).

- Computes attention scores in an additive way.

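Although the MLP contains weight matrices, the projected query and key are combined by addition inside a tanh nonlinearity rather than multiplied together, which is why this form is called additive rather than multiplicative.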
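A minimal sketch of an additive score in plain Python, assuming the common form score(q, k) = v^T tanh(W [q; k]) with a single hidden layer. The parameter values are made up for illustration and the helper names are hypothetical. Because W acts on the concatenation, the query and key may even have different dimensions.

```python
import math

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]

def additive_score(query, key, w, v):
    """Additive score: v^T tanh(W [query; key])."""
    concat = query + key                                 # concatenate query and key
    hidden = [math.tanh(h) for h in matvec(w, concat)]   # one hidden layer with tanh
    return sum(vi * hi for vi, hi in zip(v, hidden))     # project down to a scalar

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical parameters: query dim 2, key dim 3, hidden size 2.
w = [[0.5, -0.2, 0.1, 0.4, -0.3],
     [0.3, 0.8, -0.5, 0.2, 0.1]]
v = [1.0, -0.5]

query = [1.0, 0.5]                                            # decoder state
keys = [[0.2, 0.1, 0.0], [1.0, -1.0, 0.5], [0.0, 0.3, 0.9]]   # encoder states

scores = [additive_score(query, k, w, v) for k in keys]
weights = softmax(scores)
print([round(a, 3) for a in weights])
```

Splitting W into a query block and a key block shows why the method is called additive: W [q; k] equals W_q q + W_k k, so the two inputs are summed before the nonlinearity.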

Multiplicative (Dot‑Product) Attention

Also known as Luong Attention, this method:

- Uses a dot product (or a bilinear form) between queries and keys.

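- Is simpler and faster to compute, because the scores for all query‑key pairs can be obtained with a single matrix multiplication.

- Is, in its scaled form (scores divided by √d_k, where d_k is the key dimension), the basis of attention in Transformer models.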

The essential difference is how the query and key are combined: additive attention adds their projections inside an MLP, whereas multiplicative attention takes a (possibly weighted) dot product of the two.
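The sketch below shows three common multiplicative scores: a plain dot product, a bilinear ("general") form q^T W k with a learned matrix W (here a hand‑picked toy matrix), and the scaled dot product used in Transformers, which divides by √d_k. `score_matrix` illustrates how all query‑key scores together form one matrix product, Q K^T. The function names are hypothetical.

```python
import math

def dot_score(query, key):
    """Plain dot product: q . k"""
    return sum(q * k for q, k in zip(query, key))

def general_score(query, key, w):
    """Bilinear ("general") form: q^T W k, with W a learned matrix (toy values here)."""
    w_key = [sum(w_ij * k_j for w_ij, k_j in zip(row, key)) for row in w]  # W k
    return dot_score(query, w_key)

def scaled_dot_score(query, key):
    """Transformer-style scaled dot product: (q . k) / sqrt(d_k)."""
    return dot_score(query, key) / math.sqrt(len(key))

def score_matrix(queries, keys):
    """All query-key dot products at once: entry [i][j] is q_i . k_j, i.e. Q K^T."""
    return [[dot_score(q, k) for k in keys] for q in queries]

query, key = [1.0, 2.0], [3.0, 4.0]
print(dot_score(query, key))                                 # 11.0
print(general_score(query, key, [[2.0, 0.0], [0.0, 1.0]]))   # 14.0
print(round(scaled_dot_score(query, key), 4))                # 7.7782
print(score_matrix([query, [0.0, 1.0]], [key, [1.0, 0.0]]))  # [[11.0, 1.0], [4.0, 0.0]]
```

With W set to the identity matrix, the general form reduces to the plain dot product; the scaling factor only rescales scores so that they do not grow with the vector dimension.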

Attention in Image Captioning

In image captioning systems:

- The encoder is a CNN, which extracts visual features from the image.

- These CNN features act as keys and values.

- The decoder is an RNN, which generates the caption.

- The decoder hidden state acts as the query.

At each step, the decoder attends to different parts of the image, allowing the model to focus on relevant regions while generating each word.
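As a rough sketch of this setup, assume the CNN produces an H × W grid of C‑dimensional feature vectors. Flattening the grid gives one key/value vector per image region, and the decoder state serves as the query. Dot‑product scoring, the tiny 2 × 2 × 3 feature map, and the helper names are illustrative assumptions; a real captioning model may use a learned (for example, additive) scoring function and a much larger feature map.

```python
import math

def flatten_feature_map(feature_map):
    """Turn an H x W grid of C-dim vectors into a list of H*W region vectors (keys/values)."""
    return [vec for row in feature_map for vec in row]

def attend_regions(decoder_state, regions):
    """Dot-product attention of one decoder state (the query) over image regions."""
    scores = [sum(h * r for h, r in zip(decoder_state, region)) for region in regions]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    channels = len(regions[0])
    context = [sum(w * region[c] for w, region in zip(weights, regions)) for c in range(channels)]
    return weights, context

# Toy 2 x 2 feature map with 3 channels (a real CNN feature map is far larger).
feature_map = [
    [[3.0, 0.0, 0.5], [0.0, 1.0, 0.2]],
    [[0.1, 0.0, 1.0], [0.5, 0.5, 0.0]],
]
decoder_state = [1.0, 0.0, 0.0]  # hypothetical RNN hidden state for the current word

regions = flatten_feature_map(feature_map)
weights, context = attend_regions(decoder_state, regions)

# Reshape the weights back into the 2 x 2 grid to see where the model "looks".
height, width = len(feature_map), len(feature_map[0])
weight_grid = [weights[r * width:(r + 1) * width] for r in range(height)]
for row in weight_grid:
    print([round(w, 2) for w in row])
```

Reshaping the weights into the image grid is also what makes attention maps easy to overlay on the picture for interpretation.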

Conclusion

Attention mechanisms address the main limitations of traditional Seq2Seq models by removing the fixed‑size bottleneck and letting the decoder dynamically access the entire input. Using queries, keys, and values, attention improves performance, interpretability, and ease of training across many tasks, including machine translation and image captioning.

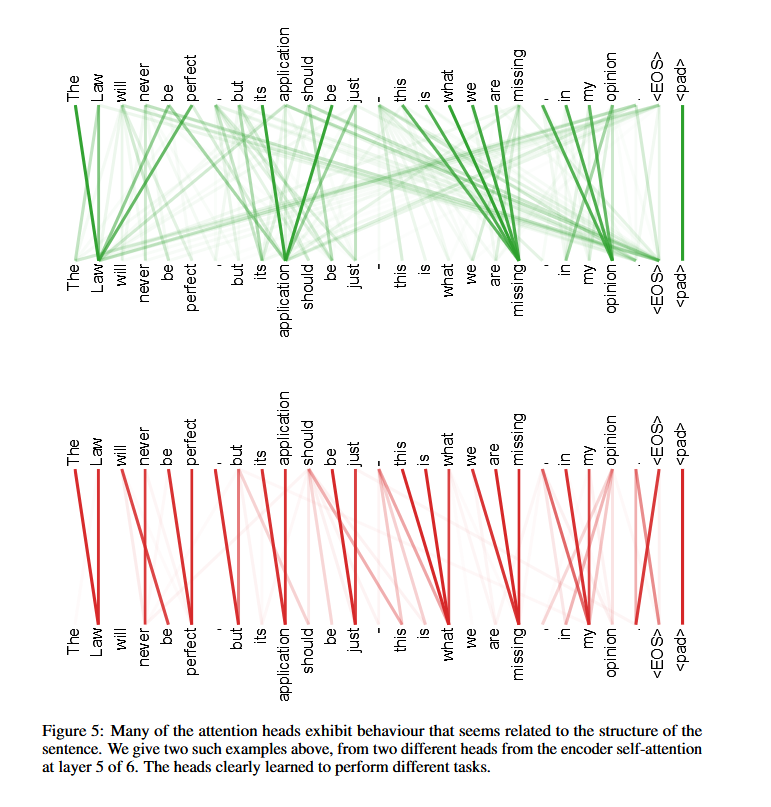

© Image: «Attention Is All You Need»

References:

- «Attention Is All You Need» on reference.org

- «Attention Is All You Need» on Google Research

- «Attention Is All You Need» on arXiv

- Uszkoreit, Jakob (31 August 2017). «Transformer: A Novel Neural Network Architecture for Language Understanding». research.google. Retrieved 9 August 2024. A concurrent blog post on the Google Research blog.

Bonus:

Remember:

You Are What You Have Read

Borges quote: “One is not what one is because of what one writes, but because of what one has read.” (Original: “Uno no es lo que es por lo que escribe, sino por lo que ha leído.”)

@Yolanda Muriel
