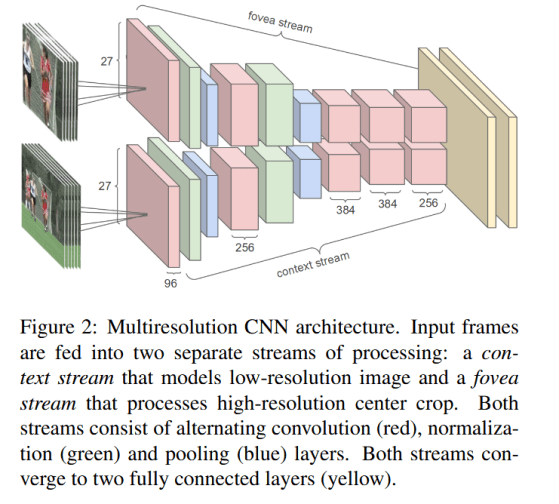

Large-scale video classification with Convolutional Neural Networks. This article is about CNNs, a class of models for image recognition problems. Dataset…

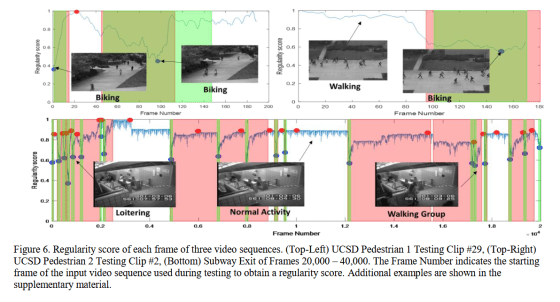

ARTIFICIAL INTELLIGENCE (55) – Computer vision (9) – Integrating CNNs, RNNs, and Efficient Design for Video Processing

Introduction: Video understanding has become a central problem in computer vision, requiring models that can process both spatial information (what…

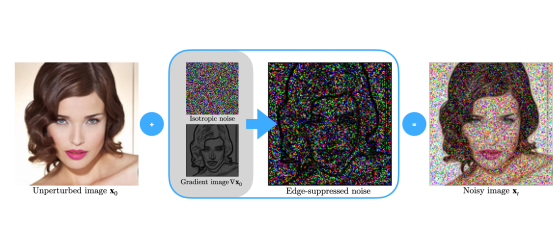

ARTIFICIAL INTELLIGENCE (54) – Computer vision (8) – Understanding Noise Scheduling and Noise Shapes in Diffusion Models

Diffusion models, particularly Denoising Diffusion Probabilistic Models (DDPMs), have become a powerful framework for generative modeling. Two key components that…

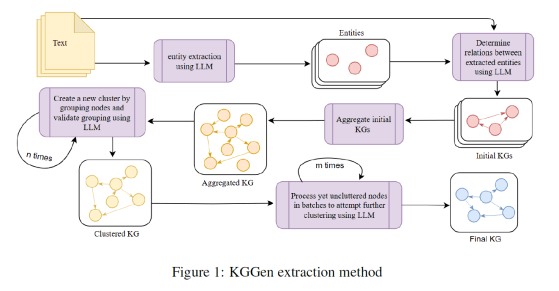

ARTIFICIAL INTELLIGENCE (53) – Natural Language Processing (24) – Knowledge Graphs KGGen

Knowledge Graphs (KGs) are made up of subject-predicate-object triples and have become an essential structure for retrieving information. Most real-world…

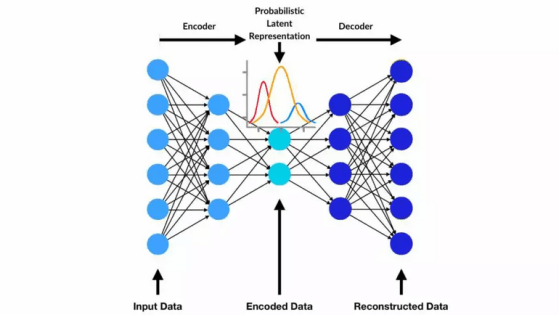

ARTIFICIAL INTELLIGENCE (52) – Computer vision (7) – Understanding Variational Autoencoders: Learning to Generate, Not Just Reconstruct

Variational Autoencoders (VAEs) represent a powerful class of generative models that go beyond traditional neural networks designed purely for reconstruction…

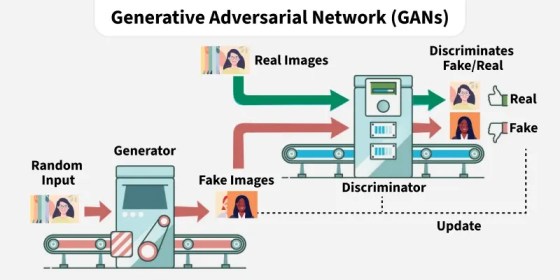

ARTIFICIAL INTELLIGENCE (51) – Computer vision (6) – Adversarial Learning in GANs: Structure, Representations, and Loss Design

Generative Adversarial Networks (GANs) are built upon a dynamic interaction between two neural networks: a generator and a discriminator. The…
