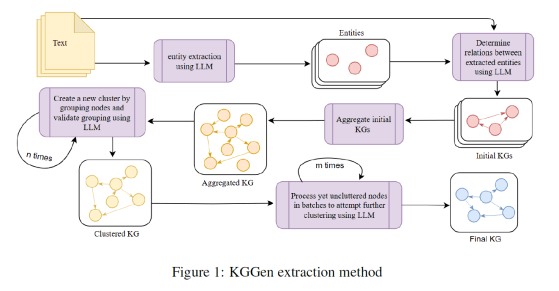

Knowledge Graphs (KGs) are made up of subject-predicate-object triples and have become an essential structure for retrieving information. Most real-world …
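To make the triple structure concrete, here is a minimal sketch that stores facts as (subject, predicate, object) tuples and answers simple pattern queries by treating `None` as a wildcard. The `Triple` and `match` names and the example facts are hypothetical and for illustration only; real KG stores index triples far more efficiently than this linear scan.

```python
from typing import List, NamedTuple, Optional


class Triple(NamedTuple):
    """One knowledge-graph fact: (subject, predicate, object)."""
    subject: str
    predicate: str
    obj: str


def match(triples: List[Triple],
          s: Optional[str] = None,
          p: Optional[str] = None,
          o: Optional[str] = None) -> List[Triple]:
    """Return every triple that fits the pattern; None acts as a wildcard."""
    return [
        t for t in triples
        if (s is None or t.subject == s)
        and (p is None or t.predicate == p)
        and (o is None or t.obj == o)
    ]


# Hypothetical example facts, for illustration only.
kg = [
    Triple("Barcelona", "locatedIn", "Spain"),
    Triple("Madrid", "locatedIn", "Spain"),
    Triple("Spain", "memberOf", "European Union"),
]

# Query pattern (?, locatedIn, Spain): "Which entities are located in Spain?"
print([t.subject for t in match(kg, p="locatedIn", o="Spain")])
```

Retrieval then amounts to filling some positions of the triple and letting the others vary.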

Author: Yolanda MURIEL

BIM Architect, Building engineer

Barcelona, Spain

ARTIFICIAL INTELLIGENCE (52) – Computer vision (7) – Understanding Variational Autoencoders: Learning to Generate, Not Just Reconstruct

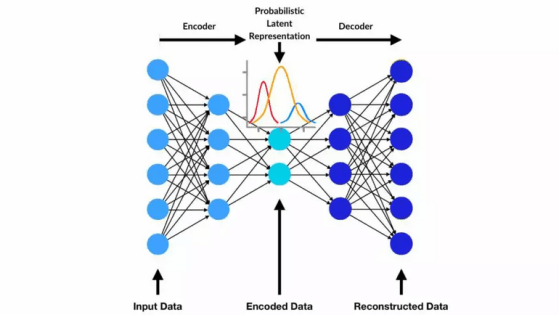

Variational Autoencoders (VAEs) represent a powerful class of generative models that go beyond traditional neural networks designed purely for reconstruction …

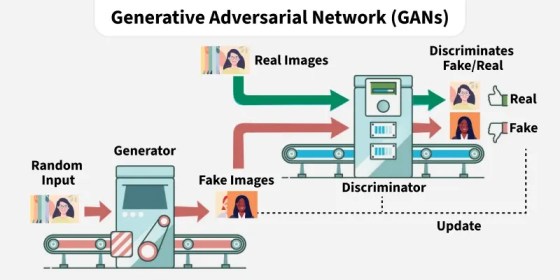

ARTIFICIAL INTELLIGENCE (51) – Computer vision (6) – Adversarial Learning in GANs: Structure, Representations, and Loss Design

Generative Adversarial Networks (GANs) are built upon a dynamic interaction between two neural networks: a generator and a discriminator. The …
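For reference, the original GAN formulation (Goodfellow et al., 2014) writes this interaction as a two-player minimax game between a discriminator $D$ and a generator $G$, where $x$ is a real sample and $z$ is a noise vector. The full article may discuss other loss designs, so treat this as the textbook baseline rather than its exact formulation:

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

$D$ maximizes $V$ by assigning high probability to real samples and low probability to generated ones, while $G$ minimizes it by producing samples $G(z)$ that $D$ scores as real.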

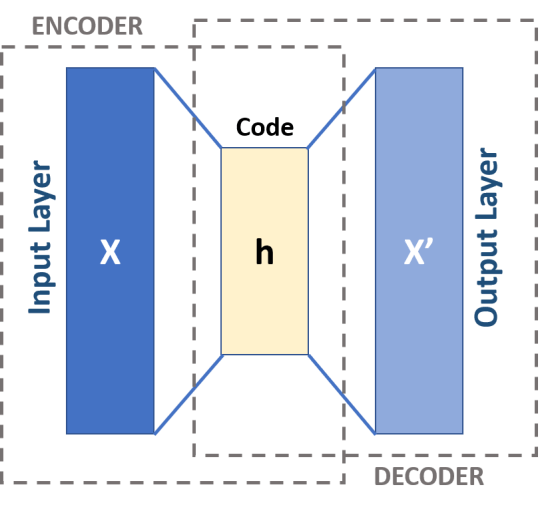

ARTIFICIAL INTELLIGENCE (50) – Computer vision (5) – Autoencoder, Variational Autoencoders (VAEs) and Diffusion models

When training an autoencoder, the objective is for the model to reconstruct its own input. For this reason, the target …
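A minimal sketch of that idea, assuming the simplest possible case: a linear autoencoder that squeezes 2-D points into a 1-D code and back, trained with plain gradient descent on mean squared error. The function name, the toy data, and the hyperparameters are hypothetical; the point is that the loss compares the reconstruction with the input itself, so no separate labels are needed.

```python
import random


def train_linear_autoencoder(data, steps=500, lr=0.1, seed=0):
    """Train a tiny linear autoencoder 2-D -> 1-D -> 2-D by gradient descent.

    The loss compares the reconstruction with the input itself:
    the training target is the input.
    """
    rng = random.Random(seed)
    # Encoder weights (a, b) compress (x1, x2) into one code value z;
    # decoder weights (c, d) expand z back into two values.
    a, b, c, d = (rng.uniform(-0.5, 0.5) for _ in range(4))
    n_terms = 2 * len(data)  # squared errors averaged over points and dimensions
    history = []
    for _ in range(steps):
        ga = gb = gc = gd = 0.0
        total = 0.0
        for x1, x2 in data:
            z = a * x1 + b * x2          # encode
            r1, r2 = c * z, d * z        # decode
            e1, e2 = r1 - x1, r2 - x2    # reconstruction error vs. the input
            total += e1 * e1 + e2 * e2
            # Accumulate gradients of the summed squared error (chain rule).
            gc += 2 * e1 * z
            gd += 2 * e2 * z
            dz = 2 * (e1 * c + e2 * d)
            ga += dz * x1
            gb += dz * x2
        history.append(total / n_terms)  # mean squared error before this update
        a -= lr * ga / n_terms
        b -= lr * gb / n_terms
        c -= lr * gc / n_terms
        d -= lr * gd / n_terms
    return (a, b, c, d), history


# Illustrative data: points on the line x2 = 2 * x1, so a 1-D code suffices.
data = [(t, 2 * t) for t in (i / 10 - 1 for i in range(21))]
weights, history = train_linear_autoencoder(data)
print(f"MSE: {history[0]:.4f} -> {history[-1]:.4f}")
```

Because the toy points lie on a line, a single code value is enough to reconstruct them, and the reconstruction error shrinks as training proceeds.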

ARTIFICIAL INTELLIGENCE (49) – Computer vision (4) – Transfer Learning

The article explains the concept of Transfer Learning in machine learning. Instead of training a deep neural network from the …

ARTIFICIAL INTELLIGENCE (48) – Natural Language Processing (23) – Regular Fine-Tuning and LoRA Fine-Tuning

Regular Fine-Tuning: in standard fine-tuning, the model starts with pretrained weights W and, during training, learns a full weight …
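A minimal sketch of the contrast, assuming the standard LoRA formulation (Hu et al., 2021): instead of training a full d × k update, LoRA keeps W frozen and trains two low-rank factors B (d × r) and A (r × k), giving W' = W + (α/r)·BA. In that paper B is initialized to zero, so training starts from the unchanged pretrained weights. The helper names and the 4096 × 4096, r = 8 layer are hypothetical, chosen only to show the parameter savings.

```python
from typing import List

Matrix = List[List[float]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    """Plain-Python matrix product X @ Y."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]


def full_update_params(d: int, k: int) -> int:
    """Regular fine-tuning: every entry of the d x k update is trainable."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """LoRA: only B (d x r) and A (r x k) are trainable."""
    return d * r + r * k


def lora_merged_weight(W: Matrix, B: Matrix, A: Matrix,
                       alpha: float, r: int) -> Matrix:
    """Return W + (alpha / r) * B @ A; the pretrained W itself stays frozen."""
    delta = matmul(B, A)
    scale = alpha / r
    return [[W[i][j] + scale * delta[i][j] for j in range(len(W[0]))]
            for i in range(len(W))]


# Parameter counts for one hypothetical 4096 x 4096 layer with rank r = 8.
print(full_update_params(4096, 4096))  # 16777216
print(lora_params(4096, 4096, 8))      # 65536
```

For this layer, LoRA trains 65,536 parameters instead of about 16.8 million, and after training the low-rank update can be merged into W so inference costs nothing extra.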
