Transformers are one of the most influential neural network architectures in modern artificial intelligence. Although they were originally introduced for language-related tasks, their design is highly general. As a result, transformers can be applied to almost any problem in which the data can be represented as a sequence or a set of elements.

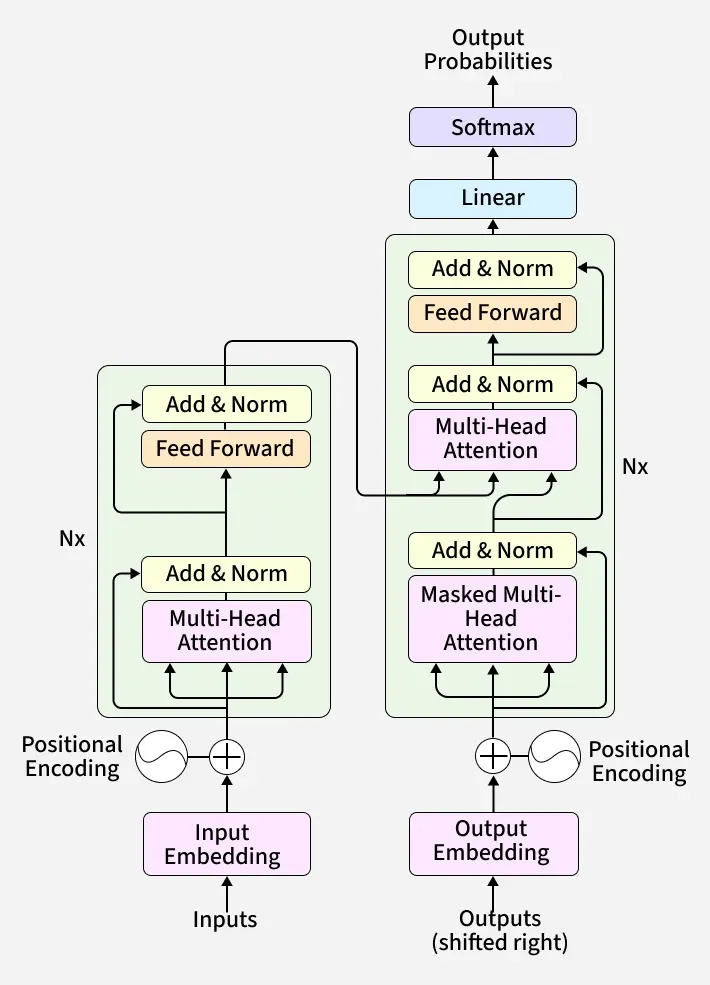

The key idea behind transformers is the attention mechanism, which allows the model to examine relationships between all elements of the input at the same time. Recurrent models process elements one at a time and convolutional models focus on local neighborhoods, but self-attention itself builds in no assumption about order or locality. When order matters, it is supplied separately through positional encodings. The model learns which relationships matter directly from the data, which makes it extremely flexible.
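To make the mechanism concrete, here is a minimal sketch of single-head scaled dot-product self-attention. It uses only the Python standard library so that it runs anywhere; a real model would use an array library and learned weights. The names `self_attention`, `matmul`, and the identity projection matrices are assumptions made for illustration, not part of any particular library.

```python
import math

def softmax(row):
    # Subtract the maximum for numerical stability before exponentiating.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    # Plain matrix product of two lists of lists.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def self_attention(x, w_q, w_k, w_v):
    # Project every input element into query, key, and value vectors.
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]
    # Score every element against every other element, scaled by sqrt(d_k).
    scores = [[s / math.sqrt(d_k) for s in row] for row in matmul(q, k_t)]
    # Each row of weights is a probability distribution over the input.
    weights = [softmax(row) for row in scores]
    # Each output is a weighted average of the value vectors.
    return matmul(weights, v), weights

# Three input elements with two features each (illustrative values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned projections
w_q = w_k = w_v = identity

out, weights = self_attention(x, w_q, w_k, w_v)
```

Every row of `weights` sums to 1, and each row of `out` mixes information from all three inputs in a single step. Reordering the rows of `x` simply reorders the rows of `out` in the same way, which is why position information has to be added to the inputs when order matters.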

In language applications, transformers are used for tasks such as machine translation, text generation, summarization, and question answering. Models like GPT rely on self-attention to understand context and long-range dependencies between words. These capabilities have set new performance standards in natural language processing.

Transformers have also been successfully applied to computer vision. In Vision Transformers (ViT), an image is divided into fixed-size patches, and the patches are treated as a sequence, similar to words in a sentence. This shows that transformers are not limited to text: they can also process visual data effectively once it has been converted into a sequence of elements.
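The patching step can be sketched in a few lines. This is a simplified illustration that assumes a single-channel image stored as a list of rows; the function name `image_to_patches` is hypothetical. In an actual ViT, each flattened patch is additionally passed through a learned linear projection and combined with a position embedding before it reaches the transformer.

```python
def image_to_patches(image, patch_size):
    # Split a 2D image (list of rows) into flattened, non-overlapping patches,
    # ordered left to right, then top to bottom.
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [image[r][c]
                     for r in range(top, top + patch_size)
                     for c in range(left, left + patch_size)]
            patches.append(patch)
    return patches

# A 4x4 "image" whose pixel values are 0..15, split into 2x2 patches.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
patches = image_to_patches(image, 2)
# patches[0] is the top-left patch: [0, 1, 4, 5]
```

The result is a sequence of four vectors with four values each, which plays the same role for the model as a sentence of four word tokens.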

Beyond language and vision, transformers are used in many other domains. In speech and audio processing, they are applied to automatic speech recognition and music generation. In time-series analysis, transformers are used for forecasting, anomaly detection, and modeling sequential sensor data. They are also increasingly important in biology, where they help analyze DNA sequences, protein structures, and molecular interactions.

Transformers transfer across so many domains because the architecture is largely domain-agnostic. It relies on few hand-crafted assumptions about the structure of the data. As long as the input can be represented numerically and organized as a sequence or a set, a transformer model can learn meaningful patterns from it.
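As a small illustration of what "represented numerically and organized as a sequence" means, the sketch below turns a DNA string into integer token IDs and then into vectors. The helper names `build_vocab` and `encode` are hypothetical, and the one-hot table is only a stand-in for the learned embedding table a real model would use.

```python
def build_vocab(symbols):
    # Assign each distinct symbol an integer ID, in sorted order.
    return {s: i for i, s in enumerate(sorted(set(symbols)))}

def encode(sequence, vocab):
    # Replace each symbol with its integer ID.
    return [vocab[s] for s in sequence]

dna = "GATTACA"
vocab = build_vocab(dna)   # {'A': 0, 'C': 1, 'G': 2, 'T': 3}
ids = encode(dna, vocab)   # [2, 0, 3, 3, 0, 1, 0]

# One-hot vectors as a placeholder for learned embeddings (an assumption).
size = len(vocab)
embedding = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]
vectors = [embedding[i] for i in ids]
```

The same pattern applies to words, audio frames, sensor readings, or image patches: once each element is a vector, the transformer processes the resulting sequence in the same way regardless of where it came from.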

In conclusion, while transformers are most commonly associated with language and vision, their true strength lies in their universality.

@Yolanda Muriel
