- The network makes a prediction.

- It compares the prediction to the correct answer and measures how wrong it was.

- Backpropagation then adjusts the network’s internal settings (weights) to reduce this error.

- This process is repeated many times until the network becomes accurate.

When a network has several output units, the total error is the sum of the errors of all those outputs. This total error is what the network tries to minimize during training.

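Backpropagation works together with gradient descent, a mathematical method for gradually adjusting the weights to make the error smaller and smaller. Backpropagation works out how much each weight contributes to the error (the gradient), and gradient descent uses that information to move each weight a small step in the direction that reduces the error. You can imagine it like finding the lowest point in a valley by always stepping downward.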
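To make the valley picture concrete, here is a minimal sketch of gradient descent on a single weight. The error surface `E(w) = (w - 3)^2`, the function names, the learning rate and the step count are all assumptions chosen for illustration; a real network has many weights and a far more complicated error surface.

```python
def gradient_descent(grad, w0, learning_rate=0.1, steps=100):
    """Repeatedly take a small step against the gradient, starting from w0."""
    w = w0
    for _ in range(steps):
        w = w - learning_rate * grad(w)  # step "downhill"
    return w


# Hypothetical one-weight error surface: E(w) = (w - 3)^2, lowest at w = 3.
def error_gradient(w):
    return 2 * (w - 3)  # dE/dw


w_final = gradient_descent(error_gradient, w0=0.0)
print(w_final)  # should end up very close to 3
```

Each step moves the weight in the direction where the error decreases, and the steps shrink as the slope flattens near the bottom of the valley.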

In short:

Backpropagation helps a neural network learn by measuring its mistakes and adjusting itself to improve.

E(w) = (1/2) * Σ_{d ∈ D} Σ_{k ∈ outputs} (t_kd - o_kd)^2

This formula represents the total error a neural network makes over all of its training examples when it tries to predict results.

Let’s break it down:

1. E(w)

This is the total error of the network.

It depends on the weights w, which are the values the network adjusts during learning.

2. (1/2)

The factor 1/2 is included to make later calculations easier: when the squared term is differentiated, the 2 that comes down from the exponent cancels the 1/2.

3. Σ_{d ∈ D}

This means we sum over all training examples.

Each example is labeled d.

4. Σ_{k ∈ outputs}

For each training example, we also sum the error for each output unit of the network.

5. (t_kd - o_kd)^2

This is the squared difference between:

- t_kd → the target (correct answer) for output k on training example d

- o_kd → the network’s prediction for output k on training example d

Squaring ensures all errors are positive and gives more weight to larger mistakes.
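The breakdown above can be sketched directly in code. This is only an illustration: the function name `total_error` and the small target and output values are made up, and the network's predictions are simply given as numbers rather than computed from weights.

```python
def total_error(targets, outputs):
    """E = 1/2 * sum over examples d and output units k of (t_kd - o_kd)^2."""
    total = 0.0
    for t_d, o_d in zip(targets, outputs):    # sum over training examples d
        for t_kd, o_kd in zip(t_d, o_d):      # sum over output units k
            total += (t_kd - o_kd) ** 2       # squared difference
    return 0.5 * total


# Two hypothetical training examples, each with two output units.
targets = [[1.0, 0.0], [0.0, 1.0]]
outputs = [[0.8, 0.1], [0.3, 0.6]]

E = total_error(targets, outputs)
print(E)  # about 0.15: half of 0.04 + 0.01 + 0.09 + 0.16
```

Notice that the largest single mistake (0.4) contributes more than half of the total, which is the "more weight to larger mistakes" effect of squaring.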

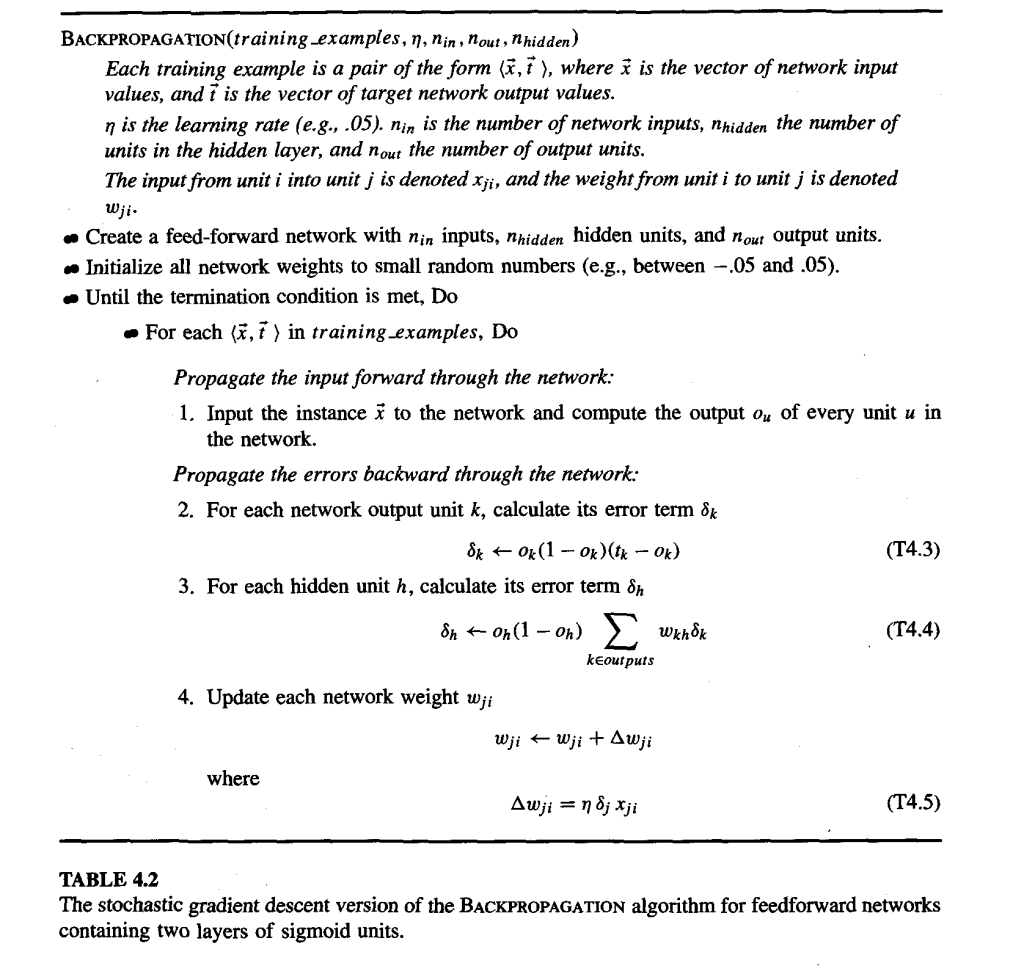

What the Backpropagation Algorithm Does

Backpropagation trains a neural network by:

- Sending inputs forward through the network to produce outputs.

- Comparing the outputs to the correct answers.

- Sending the error backward to update each weight and improve the network.

This process repeats over the training examples, usually for many passes through the whole set, until the error is acceptably small.
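The three steps can be put together in a small sketch. It assumes a network with one hidden layer, sigmoid units, and weights updated after each example; the names (`forward`, `train_step`), the layer sizes, the learning rate, the number of passes and the logical OR data are all choices made for this illustration, not part of the text above.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, w_hidden, w_out):
    """Send inputs forward; the last weight in each row is a bias weight."""
    h = [sigmoid(sum(w * v for w, v in zip(row, x + [1.0]))) for row in w_hidden]
    o = [sigmoid(sum(w * v for w, v in zip(row, h + [1.0]))) for row in w_out]
    return h, o


def train_step(x, t, w_hidden, w_out, lr=0.5):
    """One weight update for a single training example (x, t)."""
    h, o = forward(x, w_hidden, w_out)
    # Compare outputs to targets: error term of each output unit.
    delta_out = [ok * (1 - ok) * (tk - ok) for ok, tk in zip(o, t)]
    # Send the error backward: error term of each hidden unit.
    delta_hid = [
        hj * (1 - hj) * sum(w_out[k][j] * delta_out[k] for k in range(len(w_out)))
        for j, hj in enumerate(h)
    ]
    # Update every weight: w <- w + lr * (error term) * (input to that weight).
    for k, row in enumerate(w_out):
        for j, v in enumerate(h + [1.0]):
            row[j] += lr * delta_out[k] * v
    for j, row in enumerate(w_hidden):
        for i, v in enumerate(x + [1.0]):
            row[i] += lr * delta_hid[j] * v


def total_error(data, w_hidden, w_out):
    """Half the sum of squared output errors over all examples."""
    return 0.5 * sum(
        (tk - ok) ** 2
        for x, t in data
        for tk, ok in zip(t, forward(x, w_hidden, w_out)[1])
    )


random.seed(0)
# Hypothetical training set: logical OR of two inputs.
data = [([0.0, 0.0], [0.0]), ([0.0, 1.0], [1.0]),
        ([1.0, 0.0], [1.0]), ([1.0, 1.0], [1.0])]
# 2 inputs -> 2 hidden units -> 1 output, small random starting weights.
w_hidden = [[random.uniform(-0.05, 0.05) for _ in range(3)] for _ in range(2)]
w_out = [[random.uniform(-0.05, 0.05) for _ in range(3)]]

error_before = total_error(data, w_hidden, w_out)
for _ in range(2000):              # many passes over the training examples
    for x, t in data:
        train_step(x, t, w_hidden, w_out)
error_after = total_error(data, w_hidden, w_out)
print(error_before, error_after)   # the error should be lower after training
```

Updating after every example is one common choice; another is to add up the changes over all examples and apply them once per pass.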

© Image. Tom M. Mitchell

@Yolanda Muriel
