PDFs are a particularly challenging source for NLP because the format is designed to preserve visual appearance, not to expose structured, machine-friendly data. A PDF can contain anything from clean digital text to scanned images or complex multi-column layouts. As a result, building NLP systems directly on top of raw PDFs often leads to unnecessary complexity and fragile pipelines.

To address this, the most important step is to extract structure as early as possible. Instead of feeding PDFs directly into machine learning models, the content should first be converted into a structured representation, such as clean text or JSON-like data. Once the information is in this format, it can be treated like any other dataset and processed using standard NLP techniques.
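As a minimal sketch of what such a structured representation could look like, the snippet below models a document as a list of typed blocks that serialize to JSON. The `Block` and `Document` classes, their field names, and the sample content are illustrative assumptions, not the schema of any particular library.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class Block:
    """One unit of extracted content (hypothetical schema)."""
    page: int
    kind: str  # e.g. "heading", "paragraph", "table"
    text: str


@dataclass
class Document:
    """Structured stand-in for a PDF once extraction has run."""
    source: str
    blocks: list[Block] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recursively converts nested dataclasses to plain dicts.
        return json.dumps(asdict(self), ensure_ascii=False, indent=2)

    def plain_text(self, kinds=("heading", "paragraph")) -> str:
        # Keep only the block types a downstream NLP step cares about.
        return "\n\n".join(b.text for b in self.blocks if b.kind in kinds)


# Hand-written sample standing in for the output of a real PDF extractor.
doc = Document(
    source="report.pdf",
    blocks=[
        Block(1, "heading", "Quarterly Results"),
        Block(1, "paragraph", "Revenue grew in the third quarter."),
        Block(2, "table", "Region | Revenue"),
    ],
)

print(doc.to_json())
print(doc.plain_text())
```

Once content is in this shape, downstream steps can filter by block type or page without touching the original PDF again.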

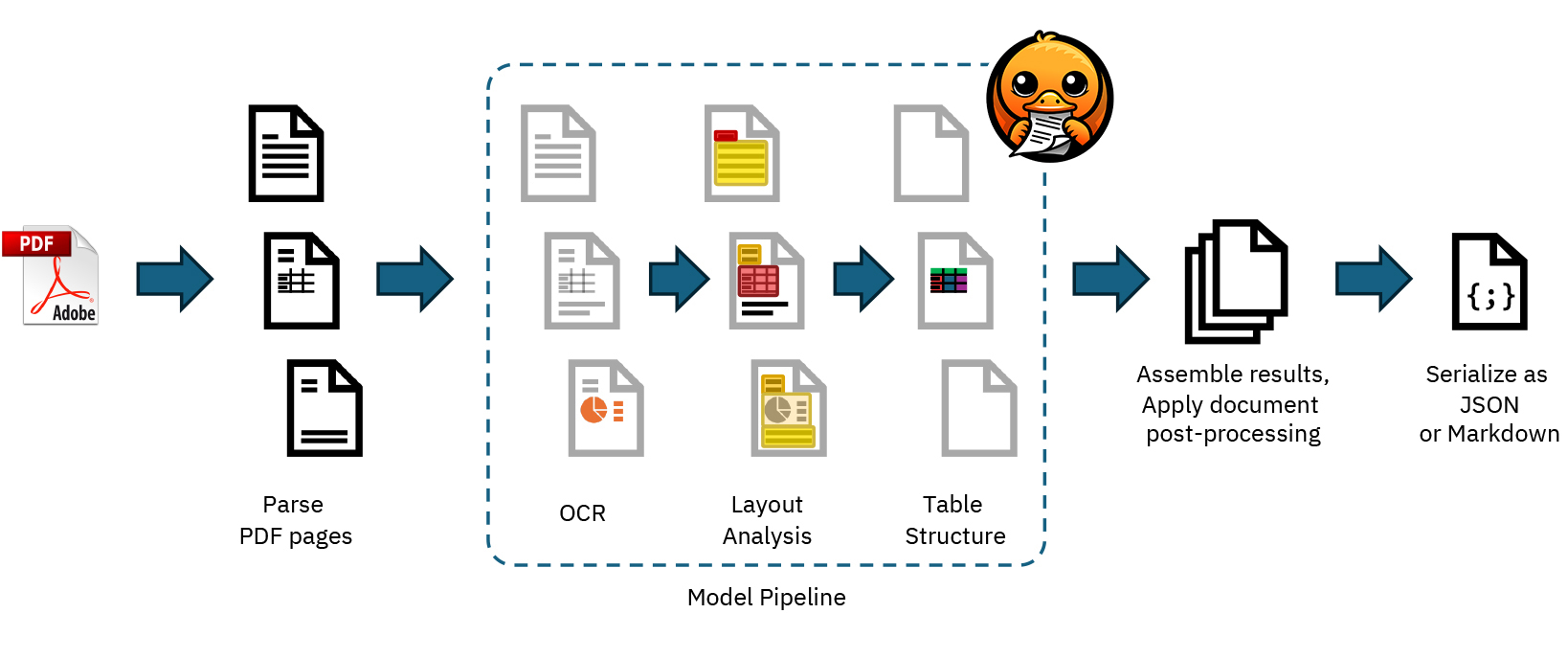

Monolithic pipelines that attempt to handle everything at once (OCR, layout understanding, information extraction, and downstream NLP tasks) are inefficient and hard to maintain. A better approach is to design modular pipelines in which each step has a clear responsibility: parsing the PDF, analyzing layout, extracting text, and then applying tasks such as named entity recognition or classification. Because each step can be tested and replaced on its own, such systems are easier to debug, improve, and scale.
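The modular design described above can be sketched as a list of small functions that each read and extend a shared dictionary. Everything here is an illustrative assumption: the stage names are hypothetical, `parse_pdf` is a placeholder that splits pre-extracted text instead of reading a real file, and the layout heuristic and year tagger are toy stand-ins for real layout analysis and named entity recognition.

```python
import re
from typing import Callable

Doc = dict  # shared state passed from stage to stage


def parse_pdf(doc: Doc) -> Doc:
    # Placeholder: a real stage would call a PDF parser or OCR engine.
    doc["lines"] = doc["raw"].splitlines()
    return doc


def analyze_layout(doc: Doc) -> Doc:
    # Toy heuristic: a short line without a final period is a heading.
    doc["blocks"] = [
        {
            "kind": "heading"
            if len(line) < 40 and not line.endswith(".")
            else "paragraph",
            "text": line.strip(),
        }
        for line in doc["lines"]
        if line.strip()
    ]
    return doc


def extract_text(doc: Doc) -> Doc:
    # Only running text is handed to the downstream NLP step.
    doc["text"] = " ".join(
        b["text"] for b in doc["blocks"] if b["kind"] == "paragraph"
    )
    return doc


def tag_years(doc: Doc) -> Doc:
    # Stand-in for NER: tag four-digit years with character offsets.
    doc["entities"] = [
        (m.group(), m.start(), m.end())
        for m in re.finditer(r"\b(?:19|20)\d{2}\b", doc["text"])
    ]
    return doc


def run_pipeline(doc: Doc, stages: list[Callable[[Doc], Doc]]) -> Doc:
    for stage in stages:
        doc = stage(doc)
    return doc


STAGES = [parse_pdf, analyze_layout, extract_text, tag_years]
raw = "Annual Report\nThe company was founded in 1998.\nIt went public in 2004."
result = run_pipeline({"raw": raw}, STAGES)
print(result["entities"])
```

Because every stage has the same signature, a single stage can be swapped out or unit-tested in isolation without changing the rest of the pipeline.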

A practical workflow combines document-processing tools with NLP frameworks, so that the extracted content is represented as structured objects that can easily be enriched with linguistic annotations. Layout and tables can provide useful signals, but relying too heavily on them can reduce how well models generalize, so their use should be weighed carefully for each task.
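One way to keep layout as an optional signal is to store it alongside each annotation and include it only when a task asks for it. The sketch below is a plain-Python illustration under that assumption; `Span`, `AnnotatedText`, and `as_record` are hypothetical names and do not reproduce the API of spaCy or any other framework.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    start: int
    end: int
    label: str
    layout: dict = field(default_factory=dict)  # e.g. {"page": 1}


@dataclass
class AnnotatedText:
    text: str
    spans: list = field(default_factory=list)

    def add_span(self, start: int, end: int, label: str, **layout) -> Span:
        # Reject offsets that do not fall inside the text.
        if not (0 <= start < end <= len(self.text)):
            raise ValueError("span offsets out of range")
        span = Span(start, end, label, dict(layout))
        self.spans.append(span)
        return span

    def as_record(self, span: Span, use_layout: bool = False) -> dict:
        # Linguistic content is always included; layout only on request.
        record = {"text": self.text[span.start:span.end], "label": span.label}
        if use_layout:
            record.update({f"layout_{k}": v for k, v in span.layout.items()})
        return record


annotated = AnnotatedText("Invoice 2024-001 issued by Acme Corp.")
start = annotated.text.index("Acme Corp")
org = annotated.add_span(
    start, start + len("Acme Corp"), "ORG", page=1, kind="paragraph"
)

print(annotated.as_record(org))
print(annotated.as_record(org, use_layout=True))
```

Keeping the switch explicit makes it straightforward to train and evaluate a model with and without layout features and compare how well each variant generalizes.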

Creating high-quality labeled data is essential, and breaking the problem into smaller, well-defined steps makes annotation more efficient and consistent. Human-in-the-loop approaches, in which people review and correct model output, are a key part of building reliable systems.

Modern large language models and vision-language models can simplify some aspects of working with PDFs, but they are not a complete solution. Treating PDFs as just another input to an LLM ignores the underlying structural challenges. Proper preprocessing and thoughtful pipeline design remain crucial.

In conclusion, PDFs should not be treated as the final input for NLP systems. Instead, they should be transformed into structured, machine-readable data first, and only then used in modular and well-designed NLP workflows.

Image. The Docling architecture for PDF processing (Auer et al., 2024)

References:

From PDFs to AI-ready structured data: a deep dive

spaCy Layout: Process PDFs, Word documents and more with spaCy

Docling: Library and models for parsing and converting document formats

@Yolanda Muriel
