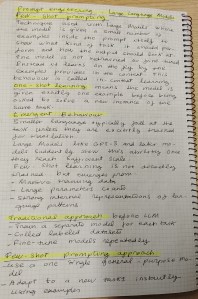

Few-shot prompting is a technique used with large language models where the model is given a small number of examples inside the prompt itself to show what kind of task it should perform and how the output should look. Importantly, the model is not retrained or fine-tuned. Instead, it learns on the fly from the examples provided in the context. This behavior is often called in-context learning.

The term emergent few-shot learning highlights that this ability was not explicitly programmed into the models, but rather emerged as models became larger and more capable.

One-Shot Prompting Example

One-shot learning means the model is given exactly one example before being asked to solve a new instance of the same task. This is a special case of few-shot prompting.

The example

The prompt reads:

Translate English to French:

sea otter => loutre de mer

cheese =>

How this works:

- The instruction “Translate English to French” defines the task.

- The single example: Input: “sea otter”, Output: “loutre de mer”.

- From this, the model infers: The task is translation, and the format is “English word => French translation”.

- When it sees “cheese =>”, the model completes it correctly (e.g., “fromage”).

This demonstrates that a single example can be enough for a large language model to generalize the pattern and apply it correctly.
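To make the structure of the prompt concrete, here is a minimal Python sketch that assembles the same one-shot prompt from its three parts: the instruction, the single example, and the open query. The function name `build_one_shot_prompt` and its parameters are assumptions made for illustration; they do not come from any library, and no model is called here.

```python
def build_one_shot_prompt(instruction: str, example: tuple[str, str], query: str) -> str:
    """Assemble an instruction, one worked example, and an open query line.

    Hypothetical helper for illustration only; it just formats text.
    """
    source, target = example
    lines = [
        f"{instruction}:",        # defines the task
        f"{source} => {target}",  # the single demonstration
        f"{query} =>",            # left open for the model to complete
    ]
    return "\n".join(lines)


prompt = build_one_shot_prompt(
    "Translate English to French",
    ("sea otter", "loutre de mer"),
    "cheese",
)
print(prompt)
```

The resulting string is what would be sent to the model as input. The last line deliberately ends at the arrow, so the most natural continuation is the French translation.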

©Image. Abhishek Bose

Emergent Behavior

Smaller language models typically fail at this translation task unless they are explicitly trained for translation. Large models (such as GPT-3 and later models), however, show this ability once they reach sufficient scale.

This suggests that few-shot learning is not directly trained, but emerges from:

- Massive training data

- Large parameter counts

- Strong internal representations of language patterns

Graph: In-Context Learning on SuperGLUE

GPT-3, without fine-tuning, can match or exceed traditionally fine-tuned models simply by being given examples in the prompt. However, it still does not reach human-level performance, even with many examples.

This represents a major shift in how machine learning systems are used:

Traditional approach (before large LLMs)

- Train a separate model for each task

- Collect labeled datasets

- Fine-tune models repeatedly

Few-shot prompting approach

- Use one single general-purpose model

- Adapt it to new tasks instantly using examples

- No retraining required

This greatly reduces:

- Development cost

- Time to deployment

- Need for labeled datasets
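The contrast between the two approaches can be sketched in a few lines of Python. In this sketch, switching tasks means swapping the instruction and examples placed in the prompt, while the model itself stays untouched. The `TASKS` table, the `make_prompt` function, and the sentiment examples are all made up for illustration, and the call to an actual model is intentionally left out.

```python
# Hypothetical task table: each task is just an instruction plus a few examples.
# Nothing here is trained; adding a task means adding an entry.
TASKS = {
    "translate": (
        "Translate English to French",
        [("sea otter", "loutre de mer")],
    ),
    "sentiment": (
        "Classify the sentiment as positive or negative",
        [("I loved this film", "positive"), ("The plot was dull", "negative")],
    ),
}


def make_prompt(task: str, query: str) -> str:
    """Build a few-shot prompt for the named task (illustrative helper)."""
    instruction, examples = TASKS[task]
    shots = [f"{x} => {y}" for x, y in examples]
    # Instruction, then the demonstrations, then the open query line.
    return "\n".join([f"{instruction}:", *shots, f"{query} =>"])


# The same general-purpose model would receive either prompt.
print(make_prompt("translate", "cheese"))
print(make_prompt("sentiment", "What a wonderful surprise"))
```

Under the traditional approach, each entry in the table would instead correspond to its own labeled dataset and its own fine-tuned model.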

In summary:

- Few-shot prompting allows large language models to learn new tasks from just a few examples.

- One-shot prompting can be enough for simple tasks, such as translating a single word.

- This learning happens inside the prompt, not through retraining.

- Performance generally improves as more examples are added.

- Large models like GPT-3 demonstrate emergent few-shot ability, matching or exceeding fine-tuned systems on benchmarks such as SuperGLUE.

- This capability fundamentally changes how AI systems are designed and used.

Bonus

Write down your ideas on paper

@Yolanda Muriel
