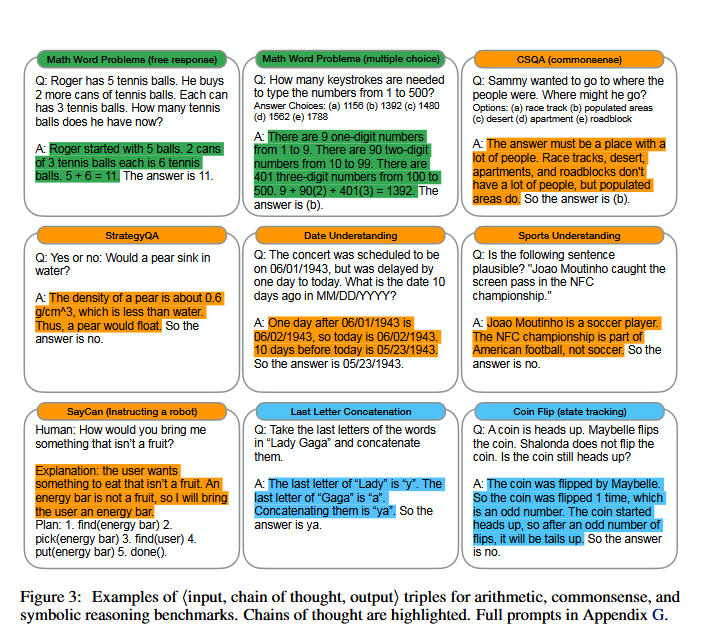

Chain-of-thought prompting is a technique in which the model is encouraged to reason explicitly, step by step, before producing a final answer. Instead of giving only the final result, the model explains how it arrives at the solution, breaking the problem down into intermediate reasoning steps. This approach is especially effective for arithmetic word problems, logical reasoning tasks, multi-step decision-making, and symbolic reasoning.

The main goals of chain-of-thought prompting are to improve accuracy on complex problems, reduce reasoning errors, and help the model generalize its reasoning process to similar problems. By seeing examples of structured reasoning, the model learns how to think, not just what answer to give.

Standard Prompting

In standard prompting:

- The model is asked a question.

- It provides a short answer without explaining the steps.

Example:

Question:

Roger has 5 tennis balls. He buys 2 more cans of tennis balls.

Each can has 3 tennis balls.

How many tennis balls does he have now?

Answer:

The answer is 11.

This is correct, but no reasoning is shown.

The problem with standard prompting appears when a new but similar question is asked:

The cafeteria had 23 apples.

They used 20 to make lunch and bought 6 more.

How many apples do they have?

The model answers:

27 (incorrect)

Why this happens:

- The model jumps directly to a conclusion.

- There is no intermediate reasoning to guide the calculation.

- The model may mix up the addition and subtraction steps.
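The standard prompt described above can be sketched as a plain string in which the worked example carries only its final answer. This is a minimal illustration, not a prescribed format: the function name `build_standard_prompt` and the `Q:`/`A:` layout are assumptions made for this example, and no particular model API is implied.

```python
def build_standard_prompt(example_question: str, example_answer: str, new_question: str) -> str:
    """Assemble a standard (answer-only) few-shot prompt.

    The 'Q:'/'A:' layout is an assumption made for illustration.
    """
    return (
        f"Q: {example_question}\n"
        f"A: {example_answer}\n\n"
        f"Q: {new_question}\n"
        "A:"
    )


roger_question = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
cafeteria_question = (
    "The cafeteria had 23 apples. They used 20 to make lunch and bought 6 more. "
    "How many apples do they have?"
)

# The example answer contains no reasoning, only the final result.
standard_prompt = build_standard_prompt(roger_question, "The answer is 11.", cafeteria_question)
print(standard_prompt)
```

The model sees only a question paired with a bare answer, so nothing in the prompt shows how the answer was reached.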

Chain-of-Thought Prompting

In this approach, the prompt gives the model an example answer that includes step-by-step reasoning.

Example:

Answer with reasoning:

Roger started with 5 balls.

2 cans of 3 tennis balls each is 6 tennis balls.

5 + 6 = 11.

The answer is 11.

This teaches the model how to identify relevant quantities, how to combine them in the correct order, and how to explain the reasoning clearly.
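A chain-of-thought prompt of this kind can be sketched in Python by placing the reasoning inside the example answer. This is only an illustrative sketch: `build_cot_prompt` and the `Q:`/`A:` layout are hypothetical names and conventions chosen here, and how the prompt is sent to a model is left out.

```python
def build_cot_prompt(example_question: str, example_reasoning: str, new_question: str) -> str:
    """Assemble a few-shot prompt whose example answer includes its reasoning.

    The 'Q:'/'A:' layout is an assumption made for illustration.
    """
    return (
        f"Q: {example_question}\n"
        f"A: {example_reasoning}\n\n"
        f"Q: {new_question}\n"
        "A:"
    )


roger_question = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
# The example answer spells out each intermediate step before the result.
roger_reasoning = (
    "Roger started with 5 balls. "
    "2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11."
)
cafeteria_question = (
    "The cafeteria had 23 apples. They used 20 to make lunch and bought 6 more. "
    "How many apples do they have?"
)

cot_prompt = build_cot_prompt(roger_question, roger_reasoning, cafeteria_question)
print(cot_prompt)
```

The only difference from an answer-only prompt is the text after the first `A:`, which now demonstrates the steps the model is expected to imitate.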

Improved Performance on a New Question

When the second problem is asked again using chain-of-thought prompting, the model outputs:

Reasoned Answer:

The cafeteria had 23 apples originally.

They used 20, so 23 − 20 = 3 apples left.

They bought 6 more, so 3 + 6 = 9.

The answer is 9.

Why this works better:

- Each step is explicit.

- The model performs subtraction first, then addition.

- Errors are much less likely.
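Because the reasoned answer ends with a fixed phrase, the final number can be pulled out and checked against the arithmetic. The sketch below assumes the response always contains the phrase "The answer is N"; the helper `extract_final_answer` is a hypothetical name introduced for this example.

```python
import re
from typing import Optional


def extract_final_answer(response: str) -> Optional[int]:
    """Return the integer following 'The answer is', or None if it is absent."""
    match = re.search(r"The answer is (-?\d+)", response)
    return int(match.group(1)) if match else None


reasoned_response = (
    "The cafeteria had 23 apples originally. "
    "They used 20, so 23 - 20 = 3 apples left. "
    "They bought 6 more, so 3 + 6 = 9. "
    "The answer is 9."
)

# Recompute the two steps in the same order as the reasoning.
apples_left = 23 - 20           # subtraction first
expected = apples_left + 6      # then addition

answer = extract_final_answer(reasoned_response)
print(answer, answer == expected)  # prints: 9 True
```

A check like this only confirms the final number; it does not verify that every intermediate sentence in the reasoning is correct.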

To summarize:

- Standard prompting often fails on multi-step reasoning tasks.

- Chain-of-thought prompting can significantly improve correctness on these tasks.

- Providing reasoning examples teaches the model how to reason, not just what to answer.

© Image. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models

Why Chain-of-Thought Is Important

Chain-of-thought prompting is a major advancement because it enables better reasoning without fine-tuning, improves performance on tasks that resemble human problem-solving, and makes large language models more reliable and consistent.

This technique plays a crucial role in:

- Advanced AI assistants.

- Educational tools.

- Decision support systems.

- Complex analytical tasks.

In short:

- Chain-of-thought prompting encourages step-by-step reasoning.

- It reduces logical mistakes in complex problems.

- This technique unlocks more powerful and reliable reasoning behavior in large language models.

Bonus

Write your ideas down on paper.

@Yolanda Muriel
